Generative Modeling with Flow-Guided Density Ratio Learning, Alvin Heng★, Abdul Fatir Ansari★, and Harold Soh★, Joint European Conference on Machine Learning and Knowledge Discovery in Databases

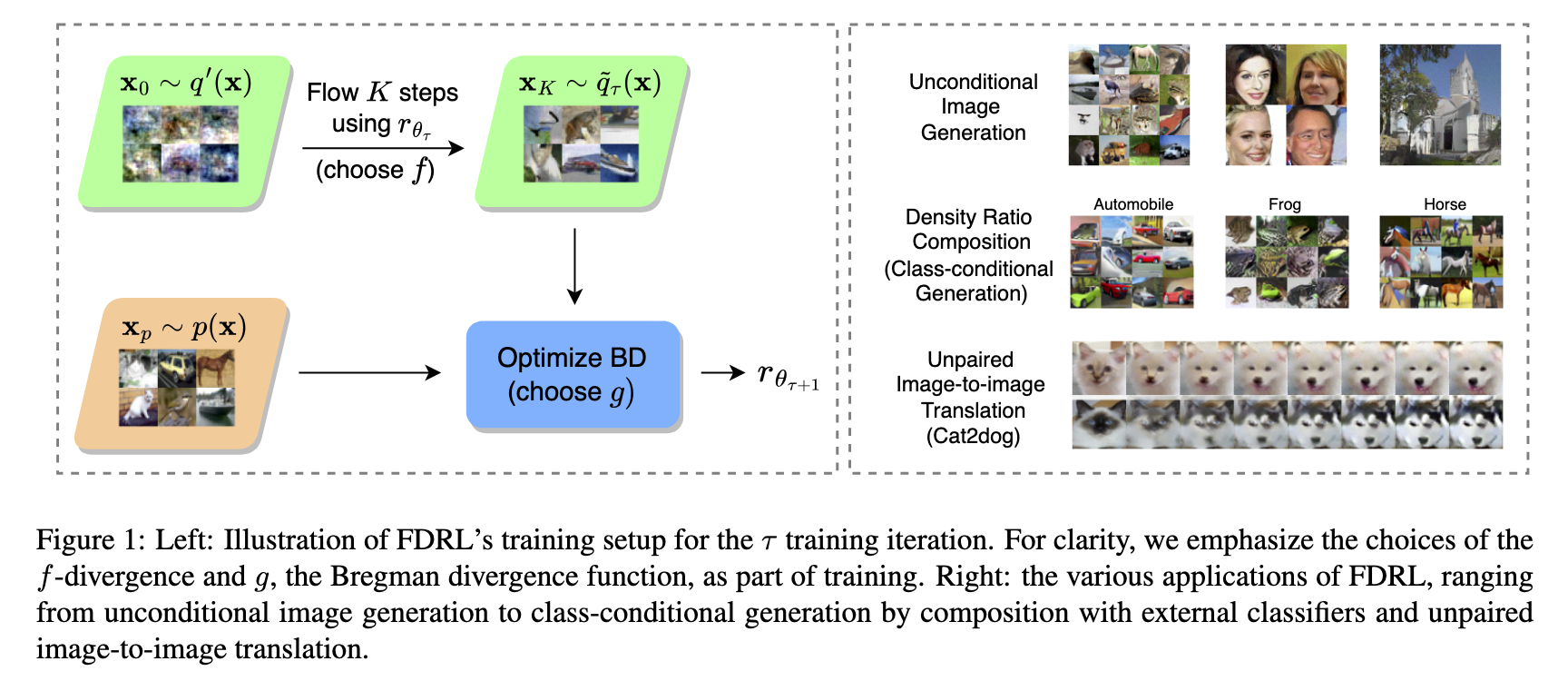

We present Flow-Guided Density Ratio Learning (FDRL), a simple and scalable approach to generative modeling that builds on the stale (time-independent) approximation of the gradient flow of entropy-regularized f-divergences introduced in DGflow. In DGflow, the intractable time-dependent density ratio is approximated by a stale estimator given by a GAN discriminator. This is sufficient for sample refinement, where the source and target distributions of the flow are close to each other. However, this closeness assumption does not hold for generation, and a naive application of the stale estimator fails because of the large chasm between the two distributions.
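To make the stale approximation concrete, here is a minimal sketch of one discretized flow step. It assumes the KL divergence as the f-divergence, for which the drift reduces to the gradient of the log density ratio between target and source, and it uses 1-D particles. The names `kl_flow_step` and `toy_grad_log_ratio` are hypothetical, and the analytic ratio stands in for a learned estimator such as a discriminator logit; this is an illustration, not the DGflow or FDRL implementation.

```python
import math
import random


def kl_flow_step(particles, grad_log_ratio, step_size, gamma, rng):
    """One Euler-Maruyama step of an entropy-regularized KL gradient flow.

    particles: list of 1-D sample positions.
    grad_log_ratio: callable x -> d/dx log(target(x) / source(x)). It is
        "stale": the same function is reused at every step instead of
        tracking the density of the moving particles.
    gamma: entropy-regularization weight, which sets the noise level.
    """
    noise_scale = math.sqrt(2.0 * gamma * step_size)
    return [
        x + step_size * grad_log_ratio(x) + noise_scale * rng.gauss(0.0, 1.0)
        for x in particles
    ]


def toy_grad_log_ratio(x):
    # Assumed toy setting: target N(2, 1) and source N(0, 1), so that
    # log(target / source) = 2x - 2 and its gradient is the constant 2.
    return 2.0


rng = random.Random(0)
particles = [rng.gauss(0.0, 1.0) for _ in range(5)]  # samples from the source
for _ in range(10):
    particles = kl_flow_step(
        particles, toy_grad_log_ratio, step_size=0.05, gamma=0.01, rng=rng
    )
```

Passing the ratio gradient as a fixed callable makes the staleness explicit: nothing in the loop updates the estimator as the particles move.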

FDRL trains a density ratio estimator on samples that progressively improve over the course of training. We show that this simple method alleviates the density chasm problem, allowing FDRL to generate images at resolutions up to 128×128 and to outperform existing gradient flow baselines on quantitative benchmarks. We also show the flexibility of FDRL with two use cases. First, unconditional FDRL can be easily composed with external classifiers to perform class-conditional generation. Second, FDRL can be applied directly to unpaired image-to-image translation with no modifications to the framework.
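The idea of learning from progressively improving samples can be sketched on a toy problem. The example below is an illustration under strong assumptions and not the authors' training procedure: the data are 1-D Gaussians, the log-ratio estimator is linear (`d(x) = w * x + b`), the number of flow steps per sample is drawn uniformly, the noise term of the flow is omitted, and all function names and hyperparameters are hypothetical.

```python
import math
import random


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def flow(x, w, num_steps, step_size):
    # The estimator d(x) = w * x + b has gradient w with respect to x,
    # so each (noise-free) flow step shifts the sample by step_size * w.
    for _ in range(num_steps):
        x = x + step_size * w
    return x


def train_ratio_estimator(num_iters=3000, max_steps=10, step_size=0.1,
                          lr=0.02, seed=0):
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    for _ in range(num_iters):
        x_data = rng.gauss(3.0, 1.0)            # sample from the toy target
        k = rng.randint(0, max_steps)           # random number of flow steps
        x_flow = flow(rng.gauss(0.0, 1.0), w, k, step_size)  # improved sample
        # Logistic loss with data labeled 1 and flowed samples labeled 0.
        # The flowed sample is treated as a constant (no gradient through it).
        g_data = sigmoid(w * x_data + b) - 1.0
        g_flow = sigmoid(w * x_flow + b)
        w -= lr * (g_data * x_data + g_flow * x_flow)
        b -= lr * (g_data + g_flow)
    return w, b


w, b = train_ratio_estimator()
generated = flow(0.0, w, num_steps=10, step_size=0.1)  # flow one source point
```

In this sketch the negatives seen by the estimator are produced by the estimator's own current flow, so they change as training proceeds instead of staying fixed at the source distribution.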

Resources

You can find our paper here. Check out our repository here on GitHub.

Citation

Please consider citing our paper if you build upon our results and ideas.

Alvin Heng★, Abdul Fatir Ansari★, and Harold Soh★, “Generative Modeling with Flow-Guided Density Ratio Learning”, Joint European Conference on Machine Learning and Knowledge Discovery in Databases, pp. 250–267, Springer, 2024

@inproceedings{heng2024generative,
  title={Generative Modeling with Flow-Guided Density Ratio Learning},
  author={Heng, Alvin and Ansari, Abdul Fatir and Soh, Harold},
  booktitle={Joint European Conference on Machine Learning and Knowledge Discovery in Databases},
  pages={250--267},
  year={2024},
  organization={Springer}
}

Contact

If you have questions or comments, please contact Alvin or Harold.